Key Features

Introduction

The Razer QA Co-AI combines intelligent automation with real-time analytics to streamline your game testing workflow. Its features help QA teams capture, track, and analyze gameplay issues, from bug detection to performance monitoring, in one unified platform.

Dashboard

The Razer QA Co-AI web dashboard is your main interface for managing test sessions, viewing bugs, triggering manual captures, and pushing issues to Jira.

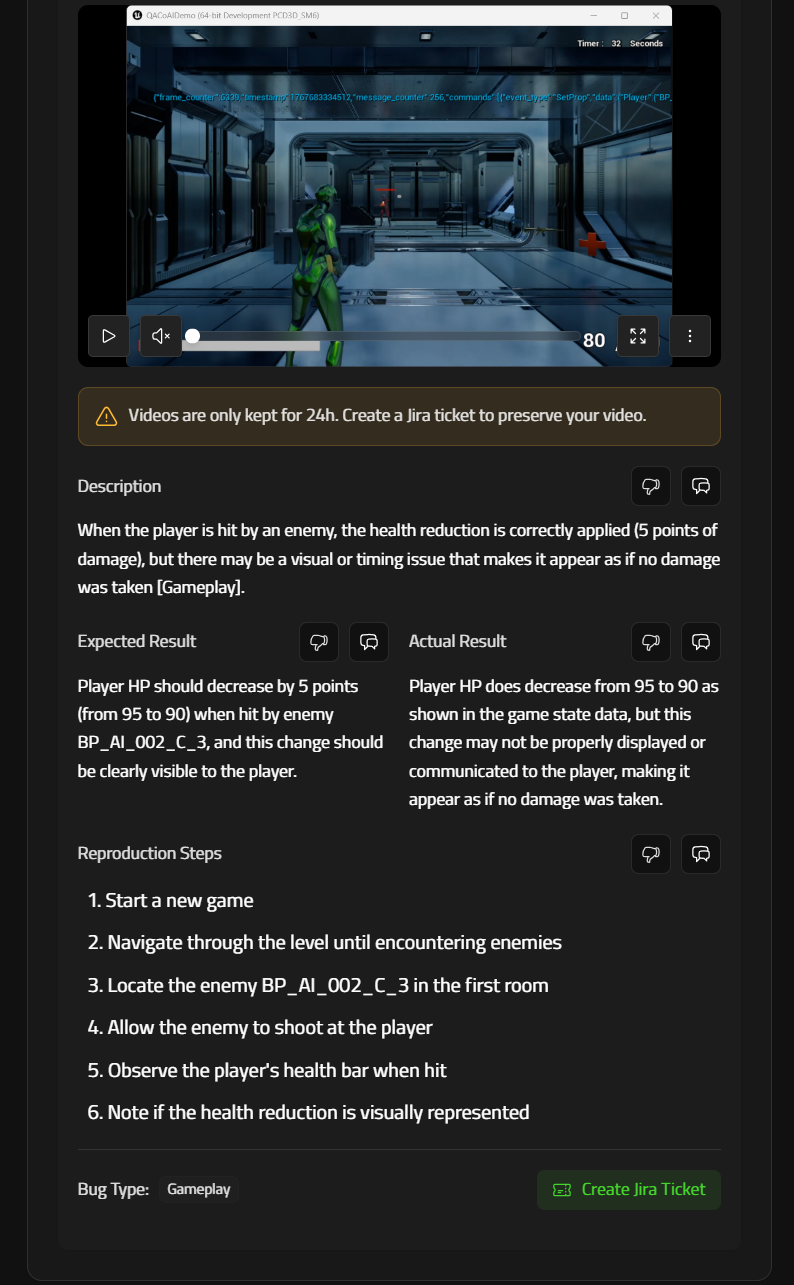

Report & Bug Overview

Each game session automatically creates a report, listed chronologically on the dashboard.

Within each report:

- Rename the report

- View the list of bugs

- Manually trigger a bug

- Edit bug metadata (title, severity, description)

- Replay the video or open the annotatable screenshot (available for 24 hours after capture)

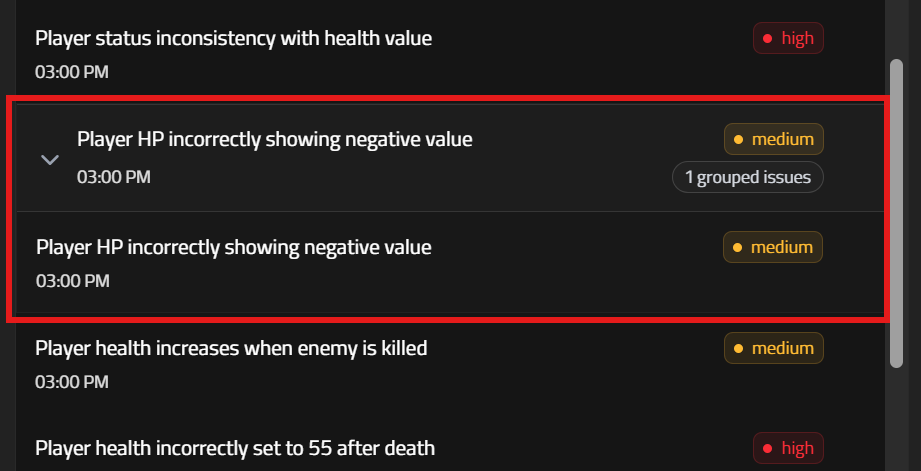

- Bug grouping by similarity: Razer QA Co-AI automatically detects and groups similar bug reports, which helps testers avoid filing duplicates. Clustering related issues keeps your reports organized and makes recurring problems easier to identify across your QA team.
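This guide does not describe how the grouping is computed. As a purely illustrative sketch of the general idea, the snippet below groups bug titles by word overlap (Jaccard similarity). The function names, the 0.5 threshold, and the greedy strategy are all assumptions made for this example and are not taken from the product.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word tokens of a bug title."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared tokens divided by all distinct tokens."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def group_similar(titles: list[str], threshold: float = 0.5) -> list[list[str]]:
    """Greedy grouping: a title joins the first group whose first member is similar enough."""
    groups: list[list[str]] = []
    for title in titles:
        for group in groups:
            if jaccard(tokens(title), tokens(group[0])) >= threshold:
                group.append(title)
                break
        else:
            # No existing group matched, so this title starts a new one.
            groups.append([title])
    return groups


bugs = [
    "Player falls through floor in level 2",
    "Player falls through the floor in level 2",
    "Audio crackles in main menu",
]
groups = group_similar(bugs)
for group in groups:
    print(group)
```

Here the first two titles land in one group and the audio bug in another. A production system would likely compare descriptions and other metadata too, not only titles.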

Settings & Utilities

From the General Settings tab, you can:

- Edit project settings

- Re-download service.conf

- View your Razer user ID and authentication token

- Connect Jira and manage integration

- Manage cookies and Hotjar tracking preferences

- Access useful links

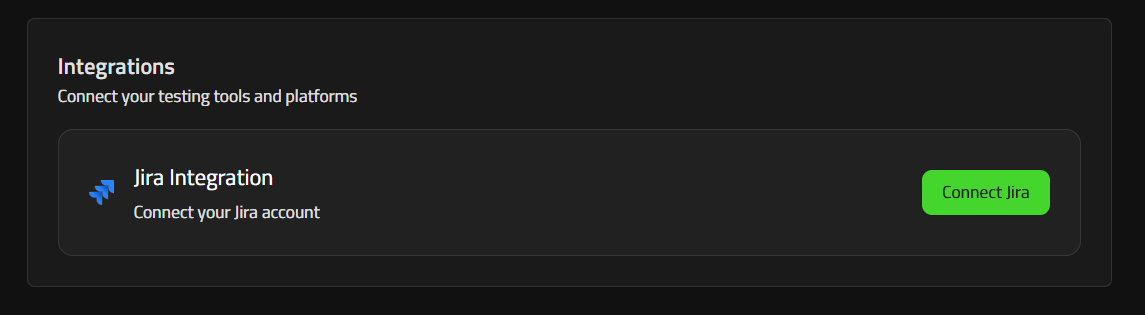

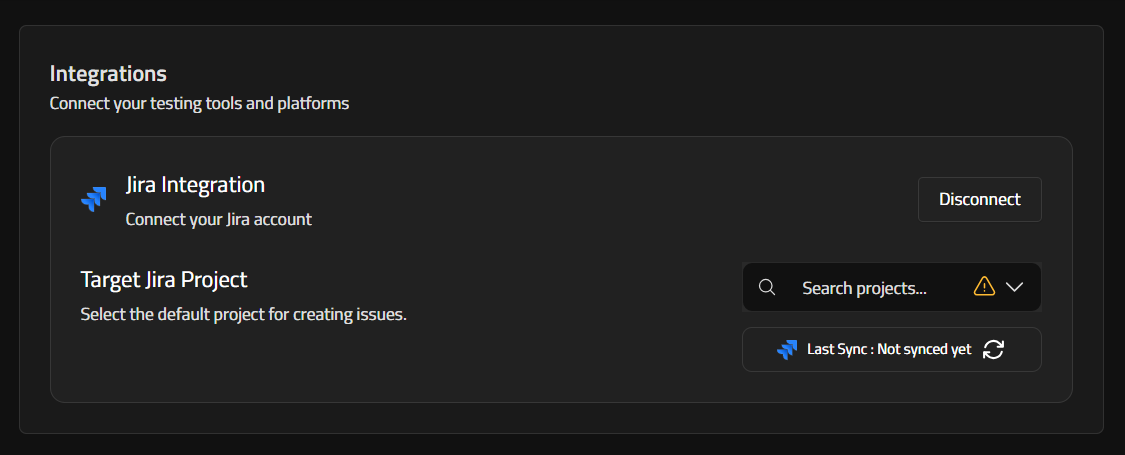

Jira Integration

To streamline issue tracking, Razer QA Co-AI integrates directly with Jira.

Setup

- Go to Settings in the top right corner.

- Select the Integrations page.

- Connect your Jira account.

- After the Jira connection succeeds, choose the Jira project in which tickets should be created.

Usage

From any valid bug (one with a video or annotatable screenshot less than 24 hours old):

- Click “Create Jira Issue”

- Fields auto-filled: title, description, severity, reproduction steps

- Video or screenshot is attached if still available

- Expired videos and screenshots are omitted automatically.

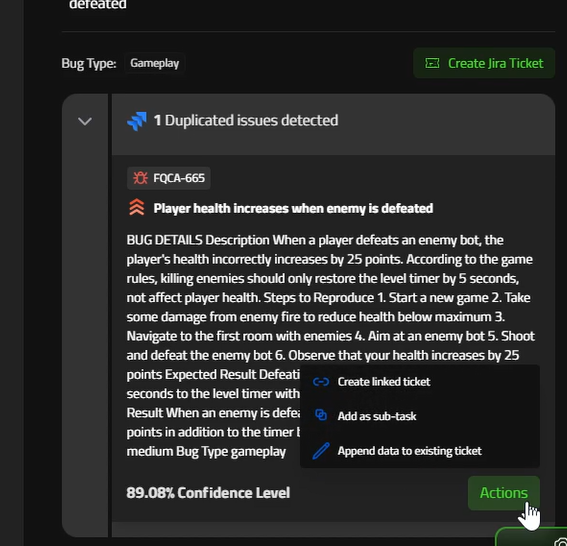
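The export behavior described above can be sketched as a small function that collects the auto-filled fields and attaches media only when it has not expired. This is a hedged illustration: the `Bug` record, its field names, and `build_issue_draft` are hypothetical and do not reflect the product's data model or the Jira API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Bug:
    """Hypothetical bug record; field names are illustrative only."""
    title: str
    description: str
    severity: str
    reproduction_steps: list[str] = field(default_factory=list)
    media_path: Optional[str] = None  # video or screenshot file, if any
    media_expired: bool = False       # True once the 24-hour window has passed


def build_issue_draft(bug: Bug) -> dict:
    """Collect the auto-filled fields; attach media only if still available."""
    draft = {
        "title": bug.title,
        "description": bug.description,
        "severity": bug.severity,
        "reproduction_steps": list(bug.reproduction_steps),
        "attachments": [],
    }
    if bug.media_path and not bug.media_expired:
        draft["attachments"].append(bug.media_path)
    return draft


steps = ["Enter the cave", "Sprint into the north wall"]
fresh = Bug("Clipping in cave", "Player clips through the wall.", "Major",
            steps, media_path="clip.mp4")
stale = Bug("Clipping in cave", "Player clips through the wall.", "Major",
            steps, media_path="clip.mp4", media_expired=True)

print(build_issue_draft(fresh)["attachments"])
print(build_issue_draft(stale)["attachments"])
```

The draft for the expired bug still carries the title, description, severity, and reproduction steps. Only the attachment is left out.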

Duplicate bug detection

The system uses AI language analysis to check the tickets in your Jira project and identify duplicate bug reports across projects. This reduces noise, streamlines triage, and improves prioritization by surfacing recurring issues and linking related reports.

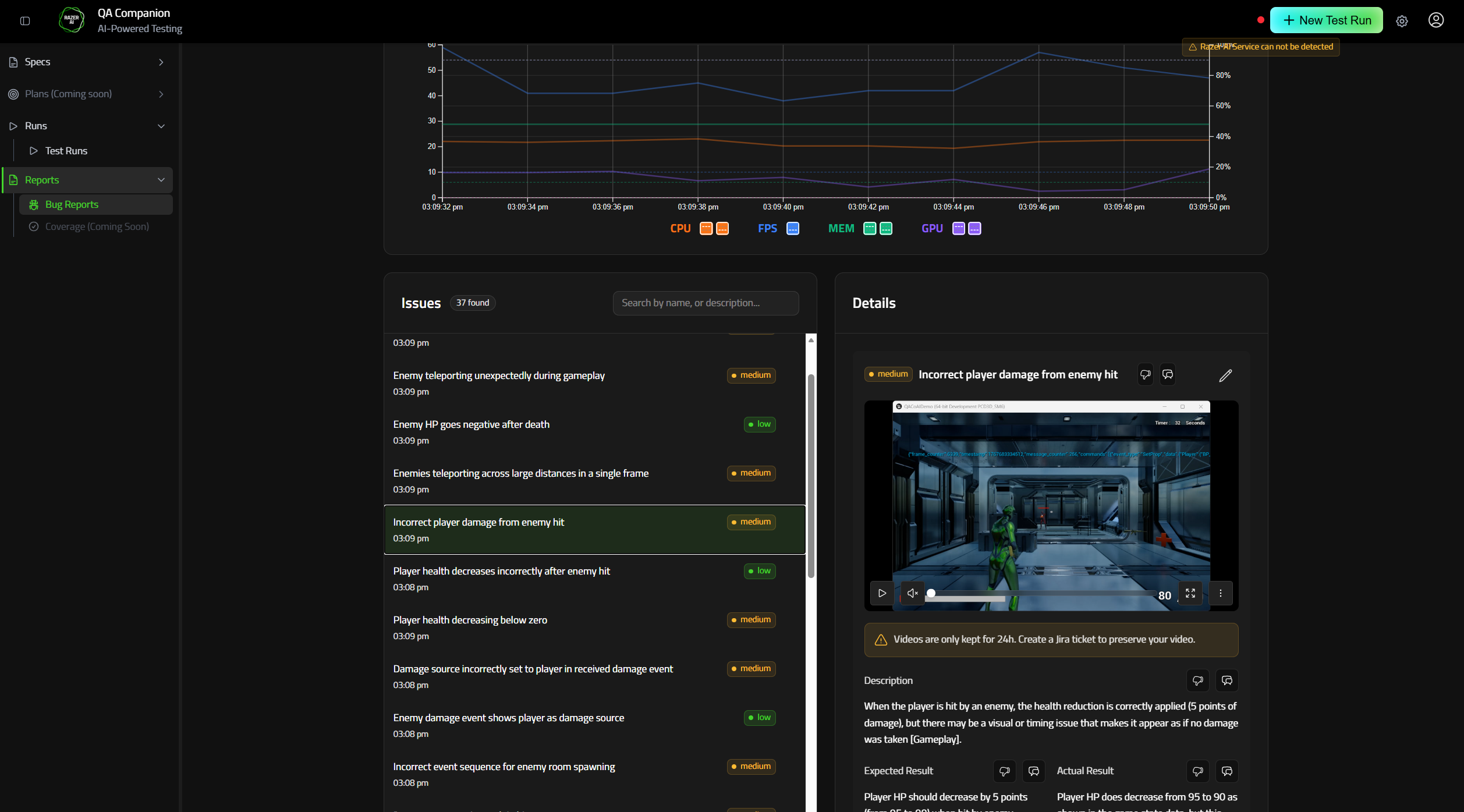

Performance Tracking

Performance Tracking lets you monitor your game’s FPS, CPU usage, GPU usage, and memory consumption in real time while a QA session is running.

When any metric breaches a user-defined threshold, the Companion automatically raises a “Performance Issue” bug entry, tags the exact timestamp, and pins a red dot on the live graph so you can correlate slow-downs with in-game events.

Why it matters

- Catch hard-to-reproduce frame-rate drops early

- Surface hidden GPU/CPU spikes in complex scenes

- Produce objective data for optimization passes

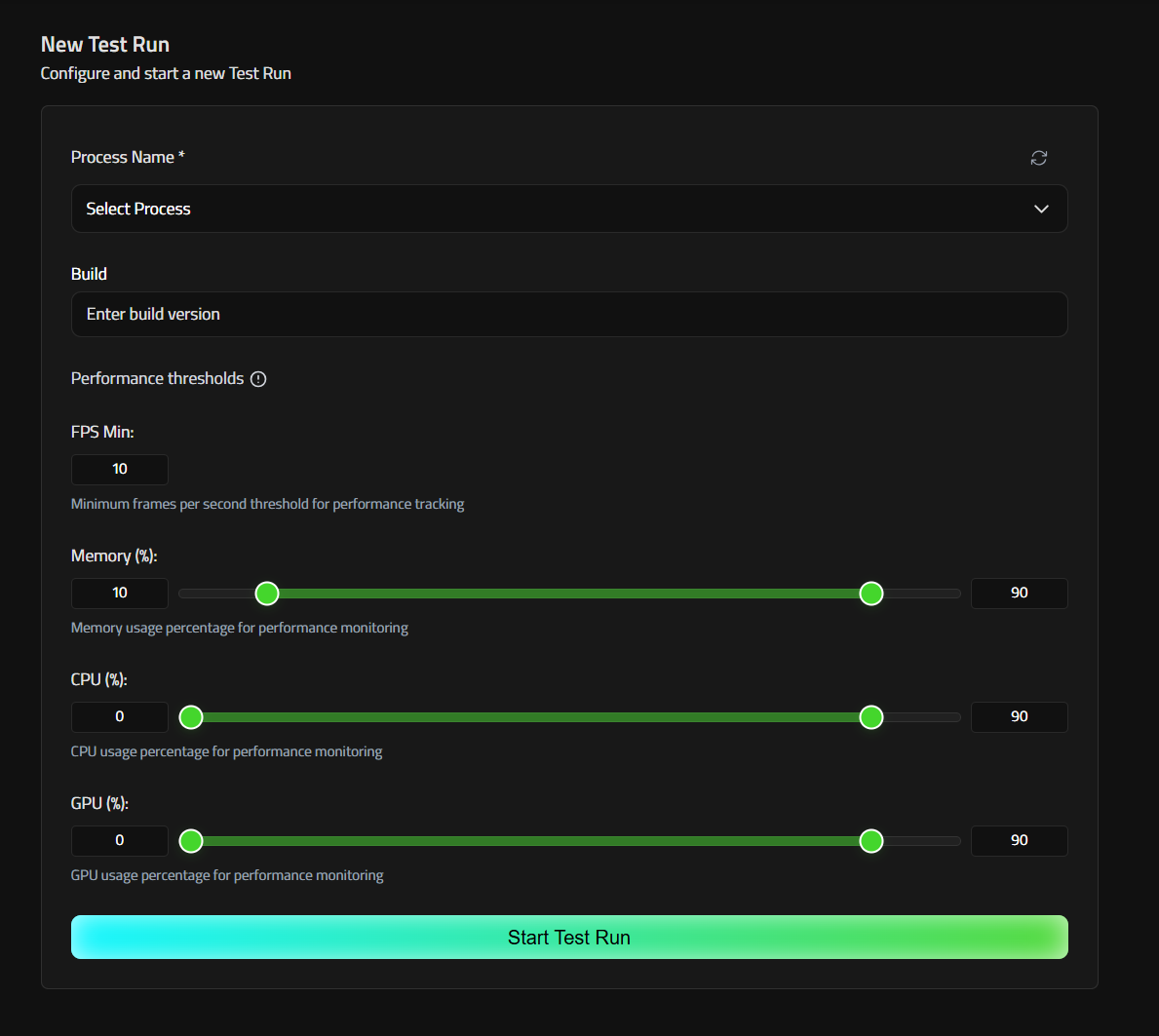

Starting a Session with Metrics Enabled

- Open the web application and click Start a new QA session.

- Select and fill in the usual fields:

- Process Name – Search or select the executable to track (e.g. MyGame).

- Build – internal build tag (max 20 chars).

- In the Performance thresholds panel (see screenshot ①):

- FPS Min – frames-per-second floor (default 30).

- Drag the sliders or type exact percentages for the upper and lower bound thresholds:

- Memory % (0–100)

- CPU % (0–100)

- GPU % (0–100)

- Click Start new QA session.

- Performance metrics will be tracked and performance issues automatically reported.

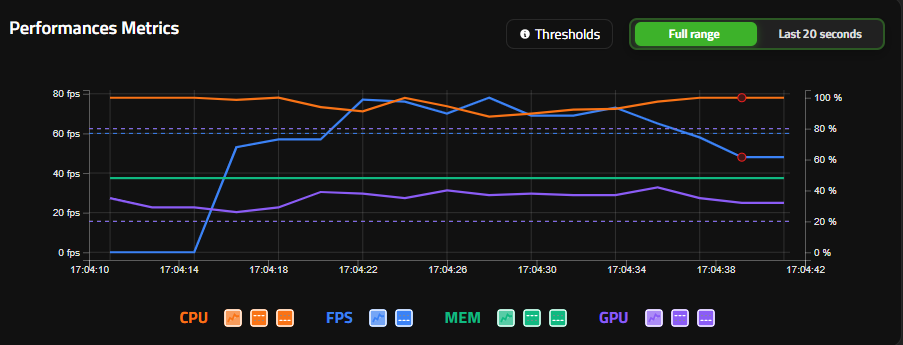

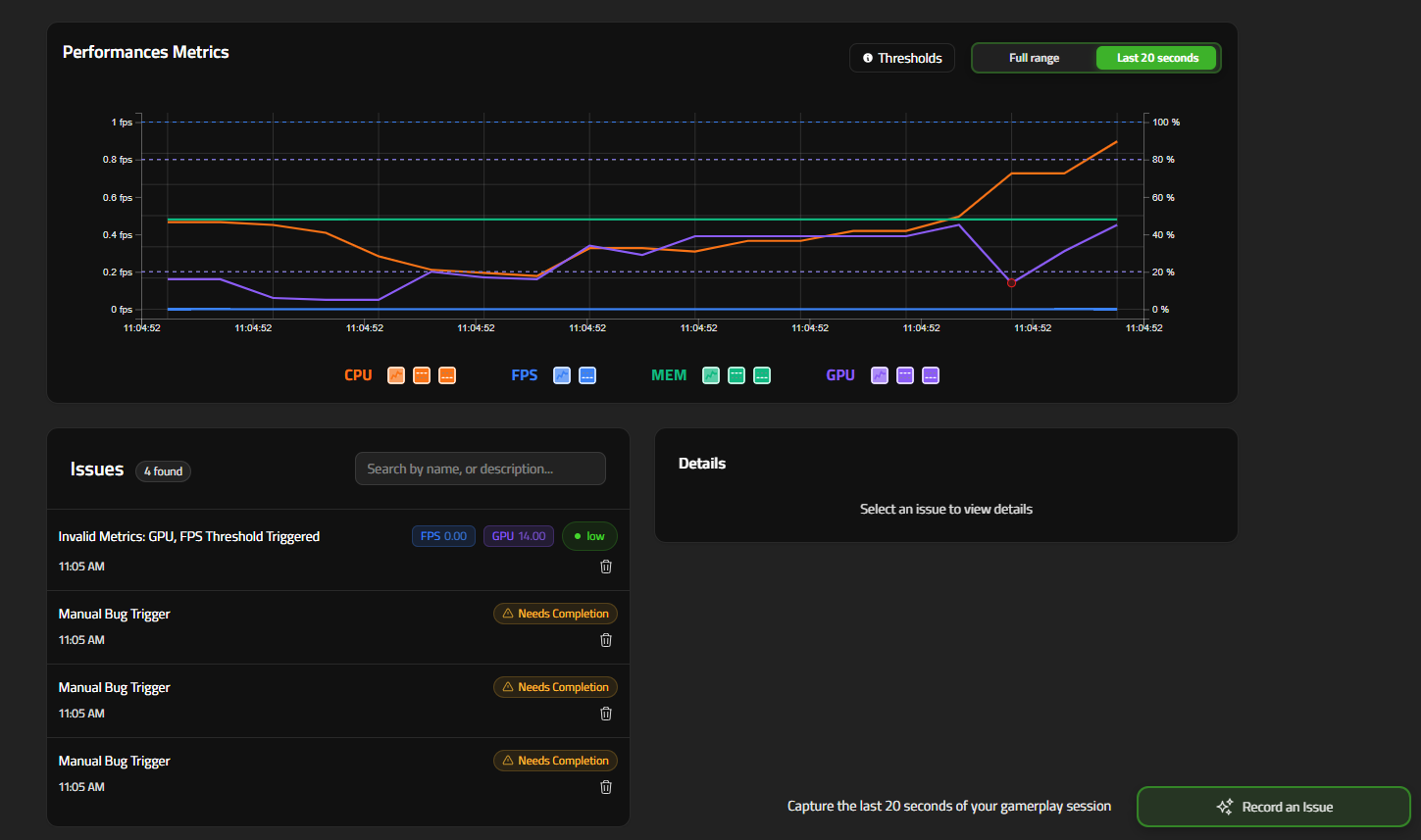

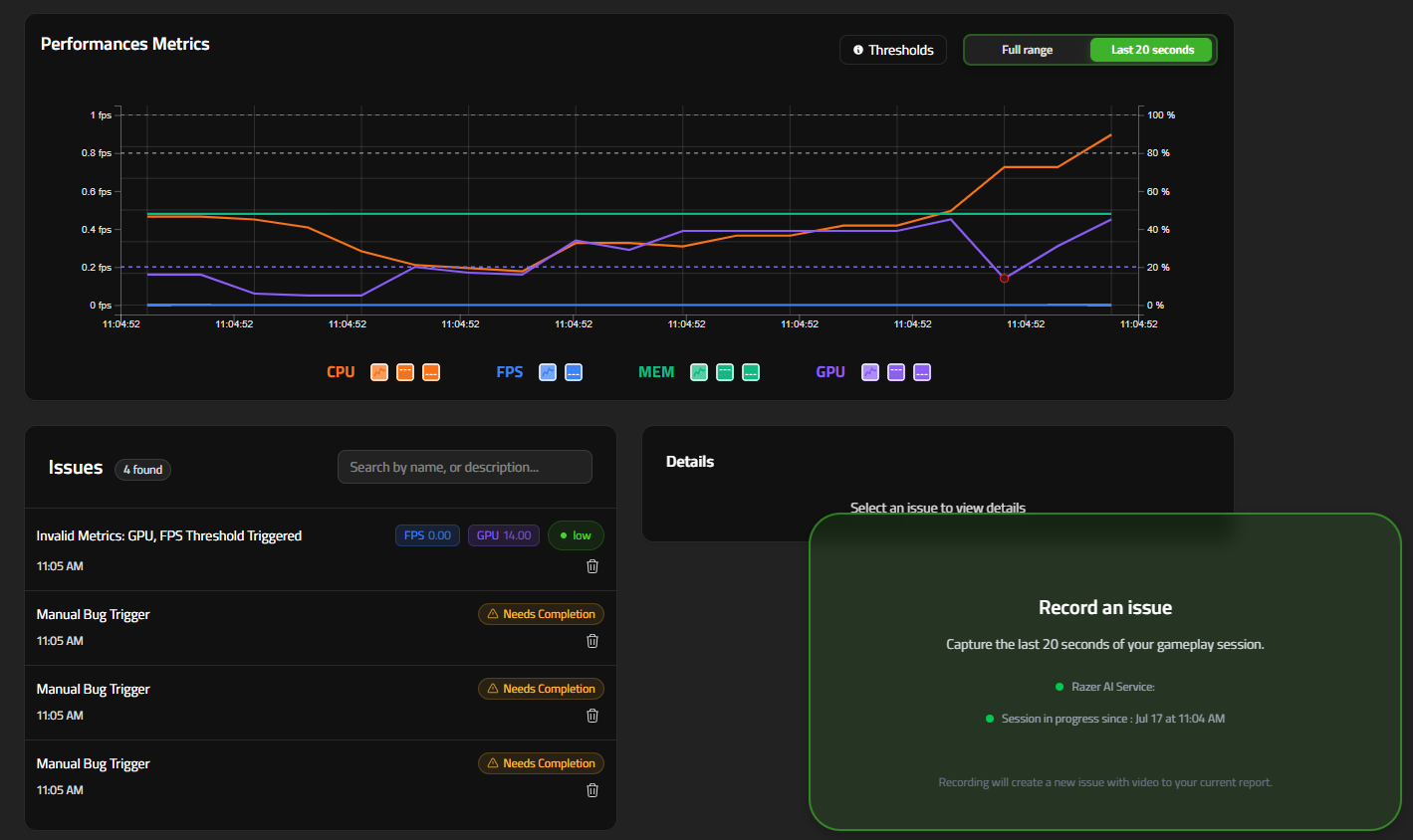
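The limits on the session form (build tag of at most 20 characters, FPS floor defaulting to 30, percentages between 0 and 100) can be expressed as a small validation routine. The sketch below is hypothetical: `SessionConfig`, `PercentRange`, and `validate` are names invented for illustration, and the rule that the FPS floor must be non-negative is an assumption.

```python
from dataclasses import dataclass, field


@dataclass
class PercentRange:
    """Lower and upper bound for a percentage metric (hypothetical type)."""
    lower: float = 0.0
    upper: float = 100.0


@dataclass
class SessionConfig:
    """Hypothetical mirror of the session form fields described above."""
    process_name: str
    build: str
    fps_min: int = 30  # default FPS floor stated in this guide
    memory: PercentRange = field(default_factory=PercentRange)
    cpu: PercentRange = field(default_factory=PercentRange)
    gpu: PercentRange = field(default_factory=PercentRange)


def validate(config: SessionConfig) -> list[str]:
    """Return a list of human-readable problems; empty means the config is valid."""
    errors = []
    if not config.process_name:
        errors.append("Process Name is required")
    if len(config.build) > 20:
        errors.append("Build must be at most 20 characters")
    if config.fps_min < 0:  # assumption: a negative floor makes no sense
        errors.append("FPS Min must not be negative")
    for name, rng in (("Memory", config.memory), ("CPU", config.cpu), ("GPU", config.gpu)):
        if not 0 <= rng.lower <= rng.upper <= 100:
            errors.append(f"{name} % bounds must satisfy 0 <= lower <= upper <= 100")
    return errors


ok = SessionConfig("MyGame", "build-0.9.1", cpu=PercentRange(0, 90))
bad = SessionConfig("MyGame", "nightly-2025-01-01-hotfix", gpu=PercentRange(50, 120))
print(validate(ok))
print(validate(bad))
```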

Live Graph & Threshold Indicators

During the session the Performance Metrics graph appears in the Report view:

| Legend | Description |
|---|---|
| Orange line (CPU) | CPU utilization %. |
| Blue line (FPS) | Frames-per-second. |
| Green line (MEM) | System memory utilization %. |
| Purple line (GPU) | GPU utilization %. |
| Red dots | Timestamp when metrics breached thresholds – a “Performance Issue” bug is auto-logged. Checked every 30 seconds. |

Use the Last 20 seconds / Full range toggle to zoom, or hover any point to see exact values and compare against your limits.

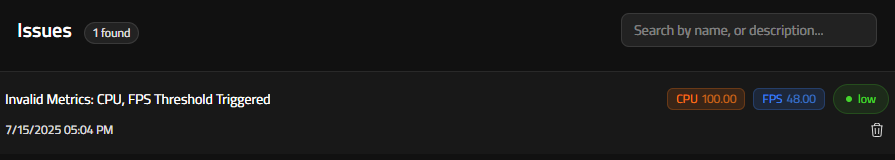
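To make the red-dot logic concrete, here is a minimal sketch of a threshold check over samples taken 30 seconds apart. It assumes a breach means FPS below the floor or a percentage outside its lower and upper bounds. The sample layout, metric keys, and `breached_metrics` function are hypothetical and not the Companion's actual implementation.

```python
def breached_metrics(
    sample: dict[str, float],
    fps_min: float,
    bounds: dict[str, tuple[float, float]],
) -> list[str]:
    """Return the names of metrics in this sample that violate their thresholds."""
    breached = []
    if sample["fps"] < fps_min:
        breached.append("fps")
    for metric, (lower, upper) in bounds.items():
        if not lower <= sample[metric] <= upper:
            breached.append(metric)
    return breached


# One sample per 30-second check; "t" is seconds since session start.
samples = [
    {"t": 0, "fps": 60, "cpu": 45, "gpu": 70, "mem": 50},
    {"t": 30, "fps": 24, "cpu": 97, "gpu": 80, "mem": 52},
    {"t": 60, "fps": 58, "cpu": 50, "gpu": 72, "mem": 53},
]
bounds = {"cpu": (0, 90), "gpu": (0, 95), "mem": (0, 85)}

red_dots = []
for sample in samples:
    hit = breached_metrics(sample, fps_min=30, bounds=bounds)
    if hit:
        red_dots.append((sample["t"], hit))

print(red_dots)  # [(30, ['fps', 'cpu'])]
```

Only the sample at 30 seconds is flagged, because its FPS is below the floor of 30 and its CPU usage is above the 90% upper bound.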

Reviewing the Auto-Generated Performance Issues

Each red dot corresponds to an auto-generated bug entry. To review these entries:

- Scroll down to the Issues section inside the current report.

- Entries of type Invalid Metrics list:

- Timestamp and the metric threshold(s) triggered.

- Snapshot of metric values at that instant.

- 20 s gameplay video (same capture window as standard bugs).

- Edit severity/description if needed.

Manual bug report

The Manual Bug Report feature lets testers capture and document bugs on demand, ensuring that every detail—visual or contextual—is recorded accurately.

Usage

To manually report a bug using Razer QA Co-AI:

- In the dashboard, select the currently open report.

- Reproduce the bug you want to capture.

- Trigger the report using one of the following methods:

- Click the Screenshot or Video button located at the bottom right of the interface.

- Use the designated hotkeys:

- Ctrl + B for a Screenshot

- Ctrl + Shift + B for a Video

After a few seconds, the new bug will appear in the bug list, automatically linked to the active report.

Screenshots can be edited using the in-app annotation interface, allowing you to highlight and comment on specific areas before submission.

Image and Video storage expiration

Bug-related screenshots and videos are available for 24 hours after capture. After this period:

- Screenshots will no longer be accessible.

- Videos will no longer be playable.

- Jira exports will exclude the screenshot and video content.

- Bug metadata (such as title, description, and tags) will remain available.
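As a small worked example of the 24-hour window, the sketch below computes how much time remains before a capture's media expires. The function name `media_time_left` is hypothetical. Treating media as expired at exactly the 24-hour mark is an assumption, since this guide does not define the boundary.

```python
from datetime import datetime, timedelta, timezone

MEDIA_TTL = timedelta(hours=24)  # retention period stated in this guide


def media_time_left(captured_at: datetime, now: datetime) -> timedelta:
    """Time remaining before the screenshot or video expires; zero once expired."""
    remaining = captured_at + MEDIA_TTL - now
    return max(remaining, timedelta(0))


captured = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)

# 21 hours after capture: 3 hours of availability remain.
left = media_time_left(captured, datetime(2025, 1, 2, 9, 0, tzinfo=timezone.utc))
print(left)  # 3:00:00

# Two days after capture: the media has expired, only metadata remains.
expired = media_time_left(captured, datetime(2025, 1, 3, 12, 0, tzinfo=timezone.utc))
print(expired == timedelta(0))  # True
```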